Corpora, Methods, and Challenges

-- Report on the Leiden Conference on Digital Research in East Asia Studies

From July 10 to 12, 2016, the conference “Digital Research in East Asia Studies: Corpora, Methods, and Challenges” was held at Leiden University. Over twenty DH scholars and computer scientists from Europe, US, Japan and Taiwan discussed ways to create and curate digital corpora of East Asian texts, apply digital methods to explore them in research across the humanities disciplines, and address the remaining challenges for DH to play a greater role in East Asian studies. The conference covered a set of topics relevant to the entire process of digital research, moving from the curation of text corpora and OCR, named entity recognition and machine learning for data extraction, text analysis with Python and topic modeling, to spatial and network analysis, and finally to a discussion of how to best develop a research infrastructure for East Asian Studies.

In the first panel, “OCR for Chinese Documents,” Donald Sturgeon, Postdoctoral Fellow at the Fairbank Center for Chinese Studies of Harvard University and creator of the Chinese Text Project digital library, and Huang Chien-kang, Associate Professor in the Department of Engineering Science and Ocean Engineering at National Taiwan University, presented two ways to improve OCR accuracy for Classical Chinese texts. Building on knowledge extracted from the Chinese Text Project database, Sturgeon demonstrated a practical OCR procedure capable of obtaining an accuracy of over 92% when given knowledge about a specific text. This procedure has already been applied to over 20 million pages of pre-modern Chinese, and the results made available online through the Chinese Text Project. For the same purpose, Huang and his colleagues, applied a different method: they developed a character segmentation algorithm which helped deliver better OCR results than working on the whole page. Supplemented with contextual analysis, this allowed them to increase accuracy to 70% in their work on Buddhist canons.

In the second panel, “Keywords and Concepts in the Digital Humanities,” Miao Shengfa, Post-doctoral Researcher at Leiden Institute of Advanced Computer Science, and Liu Chao-lin, Distinguished Professor at the Department of Computer Science, National Chengchi University, presented two applications of Named Entity Recognition (NER) methods in processing Classical Chinese texts. Miao used machine learning to automatically tag entities like person and place names and helped build a learning module in the text analysis platform MARKUS designed by Brent Ho and Hilde De Weerdt to improve recall and accuracy of texts marked up with its tagging module. Having a large set of texts, Miao pointed out, will deliver better results. Liu and his colleagues also applied machine learning in their work on local gazetteers (difangzhi), a body of texts for which there is no parser yet, in two ways: (1) using information in Chinese Biographical Database (CBDB) to annotate and learn syntactic patterns, and (2) training classification models of conditional random fields – a mathematical approach in machine learning – to recognize person and place names. While Miao and Liu explored the NER tasks from different perspectives, i.e., incremental and batch learning, their research demonstrated that with these methods data can be extracted from large corpora of texts very efficiently.

In the third panel, “Spatial Analysis of Biographies and Novels,” Wen Xin, PhD Candidate in Inner Asian and Altaic Studies at Harvard University, and Margaret Wan, Associate Professor of World Languages and Cultures at the University of Utah, presented how they investigated the spatial attributes of two genres of texts with digital methods. On the basis of a mapping of three early Tang datasets – the more than 13,000 prefects (cishi), the seven hundred military garrisons (fubing) and the home-places of owners of early Tang epitaphs in CBDB – with China Historical GIS, Wen argued that the greatest number of early Tang social elites initially resided and worked in a triangular area formed by the three capitals (Chang’an, Luoyang, and Taiyuan), but gradually shifted to populating the corridor region between Chang’an and Luoyang. Using MARKUS to mark up place names in large numbers of unexplored lesser-known Chinese novels, Wan sought to create an overview of space in the Chinese novel. MARKUS’s ability to tag place names in a large corpus and visualize them on maps allowed her to address such larger questions as whether genre has a spatial attribute, and detect the rising importance of the capital in the Chinese novel, a trend she found opposite to that in European novels.

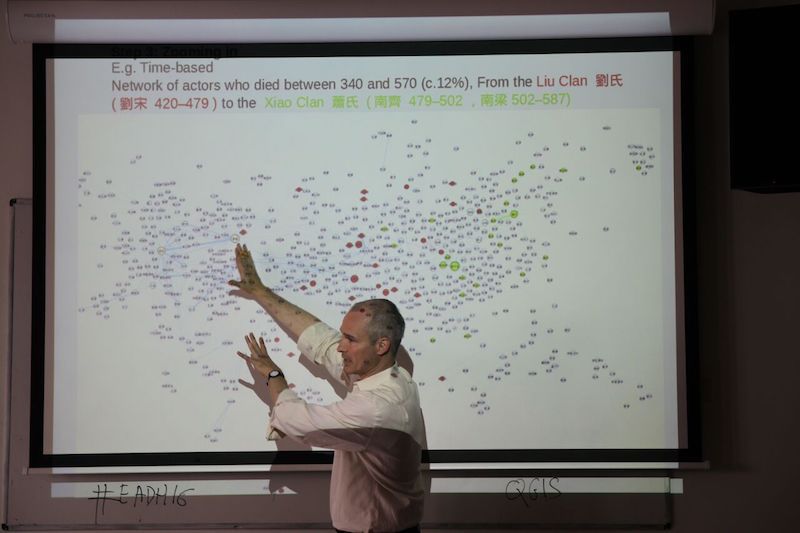

In the fourth panel, “Examining Influence and Collective Action with Social Network Analysis (SNA),” Hilde De Weerdt, Professor of Chinese History in the Leiden University Institute for Area Studies, and Marcus Bingenheimer, Assistant Professor in the Department of Religion of Temple University, illustrated how DH can help raise new questions in historical research. Through network analyses of co-occurrence of the dozens (and in one case hundreds) of names mentioned on the three faction lists in the twelfth century in thousands of Song prose texts, De Weerdt’s work is moving beyond the select case studies that previous scholars have conducted and holds out the promise that we can begin to explore how these lists came into being by taking advantage of the large corpus of contemporary prose texts. Moreover, the cluster analysis and visualization of these co-occurrences suggested unexpected connections among people usually not known to have been closely associated. In this way these experiments raise additional questions that demand further inquiry. Similarly, applying social network analysis to the personal names appearing in the four major Biographies of Eminent Monks (Gaoseng zhuan) of medieval China, Bingenheimer was able to trace their relationships to a great degree of detail. For the period of 400-550 CE, the visualizations illustrate how the different ruling houses of the Liu Song, the Southern Qi and the Liang dynasties patronized Buddhism. A comparative visualization of degree and betweenness centrality reveals the influence of Chan lineage discourse on later Buddhist historiography. While degree centrality is highest for monastics of earlier periods, Chan figures after the mid-Tang have the highest betweenness centrality in the network.

In the fifth panel, “Detecting Clusters and Topics in Chinese Text Corpora,” Chu Ping-tzu, Associate Professor in the Department of Chinese Literature at National Tsing Hua University, Paul Vierthaler, Digital Humanities Postdoctoral Fellow and Visiting Assistant Professor in the History Department at Boston College, and Ian Miller, Assistant Professor of History at St. John’s University, demonstrated three different ways to read and interpret texts digitally. Aiming to explore the temporal aspect of texts that would help enrich our understanding of them, Chu used MARKUS to investigate a variety of temporal expressions in a comparative study of two cases: Li Xinchuan’s Jianyan yilai chaoye zaji 建炎以來朝野雜記 and Ye Shaoweng’s Sichao wenjian lu 四朝聞見錄. This work-in-progress is leading to a portrait of their timelines, by using HuTime, that lays out the major events, the most important figures, and the most discussed time periods during the first four reigns of the Southern Song. Eventually, Chu hopes that by mapping temporal expressions and converting them to Western calendar years automatically, it will be possible to compare the temporal plots in different texts. Using linear algebraic and machine-learning algorithms like Principal Component Analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE) to analyze late imperial texts, Vierthaler offered a comparative analysis of two methods that can be used to explore the nature of late imperial stylistics. Vierthaler broke texts into character frequency lists and showed that principal component analysis of these lists reveal stylistic clusters that correspond directly with genre, like standard histories versus novels. T-SNE, on the other hand, provides a much more complicated and difficult-to-analyze picture. Applying topic-modeling to the large corpus of Qing Vertical Records 清實錄 (Qing Shilu), Miller used Latent Dirichlet Algorithm (LDA, which views each document as a mixture of various topics) to approximate long-term trends in the topics discussed therein. This allowed him to identify three types of trends: (1) topics with small variation around a baseline (such as “crime”), (2) secular changes in topic composition (including the instance of Han and non-Han names), and (3) topics dominated by extreme events (including “rebellion”). While most of them confirm what historians already know, with regard to his ongoing work using semi-supervised classifier training to improve the accuracy of document-level topic identification, Miller thinks it could help identify the precursors to large-scale historical events and factors that correlate with secular change.

In the sixth panel, “Analyzing Rhythm and Genre,” Liao Shueh-ying, PhD Candidate in the Historical and Philological Sciences Department of École Pratique des Hautes Études, and Liu Chen, PhD Candidate in the East Asian Languages and Civilizations Department of Harvard University, presented two approaches to the digital analysis of rhythm and genre. Liao traced rhythmic phenomena in the Book of Odes 詩經 (Shijing) and asked how non-semantic connections between two verses were established by rhythms. He proposed a new way of reading – by focusing on syntactic formula and other repetitive elements rather than on semantic analysis – that according to him allows evaluation of style distance between authors, anthologies, dynasties and regions. This also offers a new perspective to tackle some enigmatic literary phenomena like the connection between verses produced with the “incentive process” 興 (xing). Inquiring into the rise of the “letterlet” 尺牘 (chidu) genre in the Northern Song, Liu proposed another way to conduct genre analysis digitally. Different from Wan’s approach, Liu combined close reading of the leading literatus Su Shi’s letterlets with a digital analysis of topics, word frequency, and N-grams, and compared them with both contemporary letters 書 (shu) and pre-Song epistolary literature, to investigate whether the letterlets were stylistically distinct from the letters.

In the seventh panel, “Modeling and Simulating Korean History,” Kim Baro, PhD Candidate and University Lecturer at The Academy of Korean Studies, and Javier Cha, Postdoctoral Fellow in the School of Modern Languages and Cultures, the University of Hong Kong, introduced the application of DH in the study of Korean history. Kim introduced the work that has been done in digitizing historical records in Korea in the past half century and presented his ongoing work, inspired by CBDB and MARKUS, in building an integrated historical keyword system for reconstructing Korean historical data. Integrating computing into inquiries in intellectual history, Cha investigated changes in the marriage patterns of the Korean yangban aristocracy from 1100-1400 with a social network analysis of the kinship data drawn from three sources: the roster of 5,000 civil service examination degree holders, 12,000 nodes representing their kinship network, and an estimated 7 million characters of prose extracted from 200 collected works. Examining the social network of the elites in the aggregate allows him to interrogate the connection between the ideational and social aspects in their lives.

In the eighth panel, “Digital Platforms for Buddhist Studies,” Joey Hung, Associate Professor at the Dharma Drum Institute of Liberal Arts, and Christian Wittern, Professor in the Center for Informatics in East-Asian Studies, Institute for Research in Humanities, Kyoto University, introduced two digital platforms dedicated to facilitating the study of Buddhist literature. Huang and his colleagues at the Dharma Drum Institute have been developing an integrated content-rich platform, which not only contains reference materials and the latest research findings, but also provides tools for editing and annotating texts and for conducting quantitative analysis. Building on over two decades of experience with digital texts, Wittern led the development of Kanseki Repository, an online text archive featuring a large compilation of premodern Chinese texts collected and curated using firm philological principles. One of its unique features is that the texts can be accessed, edited, annotated and shared not only through a website, but also through a specialized text editor. This thus transforms it into a powerful workspace for reading, studying, and translating Chinese texts. The texts can also be downloaded and used completely independently of either system.

In the ninth panel, “Developing Research Infrastructure across East Asian Studies,” Jeff Tharsen, Digital Humanities Research and Computing Specialist in the University of Chicago Research Computing Center, and Peter Broadwell, Academic Project Developer in the University of California, Los Angeles Digital Library Program, presented two macroscopic perspectives on infrastructural development for DH in East Asian studies. Tharsen demoed the powerful Digital EDOC platform he designed, and proposed a few basic strategies for designing data architecture and computational tools to more fully exploit their untapped potential. He showed, for example, how any dictionary or lexicon can be turned into a database. While pointing out the need to tailor these strategies to each specific use, Tharsen also noted that when designed and applied with care, computational approaches can lead to striking new insights into both the texts themselves and their intellectual context. Broadwell introduced the East Asian Studies Macroscope (EASM) project at UCLA and the results of several pilot studies that applied corpus-wide textual analysis to large digitized collections of poetry from the Tang Dynasty and Heian period Japan. These examples served to highlight the key infrastructural components needed to support computational access to multiple large-scale corpora stored at different locations. They also showed how scholarly collaboration on novel macroscopic analyses such as subcorpus topic modeling can be facilitated.

Each day, following the panels, there was also a discussion session led by a leading DH scholar on larger issues concerning the DH field as a whole.

During the first day’s discussion, “Incorporating Digital Humanities into the University,” Hsiang Jieh, Distinguished Professor in the Department of Computer Science and Information Engineering, National Taiwan University, raised the question of whether DH is a discipline, and if not, how to effectively teach DH to humanities students. Taiwan is planning a 4-year special program on DH as a collaboration between different universities starting in 2018. It is hoped that over time the curriculum would be modified to equip humanities students with “computational thinking” and coding.

During the second day’s discussion, “The Digital and the Disciplinary: Looking for Interpretive Synergies,” Michael Fuller, Professor in the Department of East Asian Languages & Literature, University of California, Irvine, and the lead designer of CBDB, pointed out the crucial importance of the question raised by De Weerdt on bridging the gap between macro-, meso-, and micro-level analyses. The answers, Fuller suggested, would vary across disciplines as they tend to employ different DH techniques. Fuller also raised the question of semantic agnosticism: DH scholars tend to shift the analysis of meaning from the level of texts to that of characters, words, or topics. Participants also discussed the implication of doing DH for placement on the job market. Opinions varied as to whether this is a plus or a minus.

During the last day’s discussion – “Collaboration in the E-Humanities”, Peter Bol, Professor of East Asian Languages and Civilizations at Harvard University, Chair of the Steering Committee for CBDB, and Director of the Center for Geographic Analysis, joined from Beijing via Skype. He raised the issue of financial sustainability for DH projects. He also pointed out the need to collaborate with external partners, especially librarians and publishers.

The diverse backgrounds and large numbers of student and staff participants at the conference suggest that digital research is no longer the concern of a few professionals but has become mainstream. This first conference in East Asian Digital Humanities in Europe provided a venue for showcasing work on various aspects of the digital research process as applied to East Asian languages. It also became a starting point for collaboration on the challenges that remain in areas ranging from cross-platform data collection, improving OCR and named entity recognition, text mining, machine learning, data analysis and visualization, and data sharing and publishing.

Recent blog posts

International Medieval Congress 2015 by mchu, July 30, 2015, 3:11 p.m.

Team members Hilde De Weerdt, Chu Mingkin and Julius Morche contributed to the panel “Historical Knowledge Networks in Global Perspective” ......read more

MARKUS update and new tools by hweerdt, March 12, 2015, 6:38 a.m.

The MARKUS tagging and reading platform has gone through a major update. New features are ......read more

Away day for the "State and society network" at LIAS by mchu, Dec. 5, 2014, 12:40 p.m.

Team members Hilde De Weerdt, Julius Morche and Chu Ming-kin participated in the Away Day of the “state and society ......read more

See all blog posts

Recent Tweets

Recent Tweets

-

@Hilde De Weerdt

1193 copy of al-Istakhrı's 10th C world #map, a maritime view of Afro-Eurasia as a world connected by seas--annotat… https://t.co/mZlZSIC0C41 year, 7 months ago

@Hilde De Weerdt

1193 copy of al-Istakhrı's 10th C world #map, a maritime view of Afro-Eurasia as a world connected by seas--annotat… https://t.co/mZlZSIC0C41 year, 7 months ago -

@Monica H Green

A reminder that all the essays in the 2014 volume, *Pandemic Disease in the Medieval World: Rethinking the Black De… https://t.co/RntQ3Gw0On1 year, 7 months ago

@Monica H Green

A reminder that all the essays in the 2014 volume, *Pandemic Disease in the Medieval World: Rethinking the Black De… https://t.co/RntQ3Gw0On1 year, 7 months ago -

@Journal for the History of

Knowledge

We are pleased to announce the theme of the new @jhokjournal special issue: 'Histories of Ignorance', with guest ed… https://t.co/5RRYoEsxoe1 year, 7 months ago

@Journal for the History of

Knowledge

We are pleased to announce the theme of the new @jhokjournal special issue: 'Histories of Ignorance', with guest ed… https://t.co/5RRYoEsxoe1 year, 7 months ago -

@Hilde De Weerdt

CFP: Between Asia and Europe: Whither Comparative Cultural Studies? University of Ljubljana, May 2020 https://t.co/eyaWwNprEd1 year, 8 months ago

@Hilde De Weerdt

CFP: Between Asia and Europe: Whither Comparative Cultural Studies? University of Ljubljana, May 2020 https://t.co/eyaWwNprEd1 year, 8 months ago -

@Craig Clunas 柯律格

Honoured to join the editorial board of "The Court Historian" as an index of the journal's wish to publish more stu… https://t.co/dgxW1hIYQ41 year, 8 months ago

@Craig Clunas 柯律格

Honoured to join the editorial board of "The Court Historian" as an index of the journal's wish to publish more stu… https://t.co/dgxW1hIYQ41 year, 8 months ago -

@Global History of Empires

"And yet there is so much more to African history than stale narratives of slavery and colonialism." https://t.co/F8M0KTgIsL1 year, 8 months ago

@Global History of Empires

"And yet there is so much more to African history than stale narratives of slavery and colonialism." https://t.co/F8M0KTgIsL1 year, 8 months ago